There’s a specific moment when a tool stops feeling like a tool. When you stop thinking about how to use it and start just using it. That moment is happening right now with AI — and it’s more interesting, and more complicated, than most coverage suggests.

Think about the last time you consciously thought about using Google.

Not what you searched for — the act of searching itself. The deliberate decision to open a browser, navigate to a search engine, and type a query. At some point in the early 2000s, that was a conscious choice you made. Today it’s muscle memory. You don’t “use Google.” You just look things up. The tool dissolved into the habit.

Something similar is starting to happen with AI — and it’s happening faster than most people realise, because it doesn’t feel like a technology shift. It feels like a change in how you think.

This is an essay about that change. What’s driving it, what it actually feels like when it happens, where it breaks down, and what it means for the roughly 35% of people who — according to current usage data — now use AI tools every single day.

The Numbers Behind the Habit

Before we get into what this feels like, it’s worth anchoring the conversation in what’s actually happening at scale.

A 2026 survey found that 35.5% of people now use AI tools every day, and 84.6% report that their usage has grown over the past twelve months. Pew Research Center, as of March 2026, found that roughly two-thirds of American teenagers use AI chatbots regularly — and that for a meaningful portion of them, it is the first place they go to learn something new.

In professional contexts, 84% of developers use or plan to use AI tools, with 51% already using them daily. Microsoft Copilot users save an average of 26 minutes per day on routine tasks, which sounds modest until you do the math: over a year of roughly 230 working days, 26 minutes a day adds up to about 100 hours, or more than two full 40-hour working weeks per person.
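The conversion is easy to check. The sketch below assumes about 230 working days a year and a 40-hour week. Neither figure comes from the Copilot data, so treat them as adjustable assumptions for your own calendar.

```python
# Back-of-envelope check: how much time does 26 minutes a day add up to?
# Assumptions (not stated in the source data): about 230 working days
# per year and a 40-hour working week.
MINUTES_SAVED_PER_DAY = 26
WORKING_DAYS_PER_YEAR = 230
HOURS_PER_WORK_WEEK = 40


def annual_hours_saved(minutes_per_day: float, working_days: int) -> float:
    """Convert a daily saving in minutes into total hours per year."""
    return minutes_per_day * working_days / 60


def work_weeks(hours: float, week_hours: float = HOURS_PER_WORK_WEEK) -> float:
    """Express a number of hours as full-time working weeks."""
    return hours / week_hours


hours = annual_hours_saved(MINUTES_SAVED_PER_DAY, WORKING_DAYS_PER_YEAR)
weeks = work_weeks(hours)
print(f"{hours:.1f} hours per year, or {weeks:.1f} working weeks")
```

Under those assumptions it prints `99.7 hours per year, or 2.5 working weeks`, a little more than two weeks. At 250 working days the total rises to roughly 108 hours.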

Sixty percent of US adults are now using AI in some form. A year ago, “AI usage” largely meant talking to a chatbot. Now it means something diffuse and embedded: AI-assisted search, AI-written email suggestions, AI-curated content feeds, AI-generated meeting summaries, AI draft edits. Most of it invisible. Most of it not labelled “AI” at the moment of use.

That invisibility is the tell. When you stop noticing the technology, it’s no longer a tool. It’s infrastructure.

“The line between ‘using technology’ and ‘using AI’ is blurring fast, and in 2026, it’s almost impossible to separate the two.”

What Actually Changes When AI Becomes a Daily Habit

There’s a quality difference between using AI once a week and using it every day that doesn’t get talked about enough.

When you use AI occasionally, you treat each session as a fresh start. You explain your context from scratch. You describe your preferences. You correct the same tendencies you corrected last time. You get a useful output, use it, and move on. It’s transactional. The AI is a vending machine: useful, impersonal, and completely indifferent to your history.

When you use AI every day, something different starts to happen — particularly now that memory is becoming standard. The system begins to hold context across sessions. It learns that you prefer bullet points over prose in status updates. That you’re working on a Series A fundraise and need financial language to be precise. That when you say “tighten this up,” you mean reduce by about 30% and cut the hedging language, not just make it slightly shorter.

This is what Perplexity described when it launched its memory feature: rather than treating your history as training data, it retrieves relevant context from your memory store and uses it directly in crafting answers. The difference between those two things (using your history as statistical input versus actually remembering what you told it) is the difference between a tool that happens to know things about you and one that shares a genuine working context with you.
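To make the distinction concrete, here is a deliberately simplified sketch of retrieval-style memory. It is not Perplexity’s (or anyone’s) actual implementation: `MemoryStore`, `retrieve`, and `build_prompt` are hypothetical names, and simple word overlap stands in for the embedding search a real system would more likely use. The point is only the shape: remembered facts are looked up per request and placed directly into the context the model reads.

```python
import re
from dataclasses import dataclass, field


def tokenize(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for real semantic matching."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class MemoryStore:
    """Hypothetical per-user memory: plain-text facts the user has shared."""
    memories: list[str] = field(default_factory=list)

    def remember(self, fact: str) -> None:
        self.memories.append(fact)

    def retrieve(self, query: str, k: int = 2) -> list[str]:
        """Return up to k memories sharing the most words with the query."""
        q = tokenize(query)
        scored = [(len(q & tokenize(m)), m) for m in self.memories]
        relevant = [(s, m) for s, m in scored if s > 0]  # drop unrelated facts
        relevant.sort(key=lambda pair: pair[0], reverse=True)
        return [m for _, m in relevant[:k]]


def build_prompt(store: MemoryStore, request: str) -> str:
    """Put the retrieved memories directly into the prompt the model sees."""
    lines = ["Known about this user:"]
    lines += [f"- {m}" for m in store.retrieve(request)]
    lines += ["", f"Request: {request}"]
    return "\n".join(lines)


store = MemoryStore()
store.remember("Prefers bullet points over prose in status updates.")
store.remember("Working on a Series A fundraise; financial language must be precise.")
store.remember("'Tighten this up' means cut about 30% and remove hedging.")
print(build_prompt(store, "Draft a status update on the Series A fundraise"))
```

Asked for a status update on the fundraise, the store surfaces the Series A and bullet-point notes and leaves the unrelated “tighten this up” preference out. That selective, per-request recall is the behaviour the paragraph describes, as opposed to folding everything you have ever said into a model’s statistics.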

ChatGPT’s memory, Claude’s project-scoped memory, Notion AI’s workspace context — these are all attempts to solve the same problem: the stateless chatbot is useful, but the persistent, context-aware assistant is transformative. And the industry has largely converged on the view that memory is the critical feature that turns AI from something you use into something you work with.

The Three Stages of How This Shift Actually Happens

In our observation, and based on what regular users report, the shift from “AI as tool” to “AI as daily partner” tends to happen in three recognisable stages. They’re not formal or clean, but they’re consistent.

Stage One: Utility. This is where most new users start. You have a specific task. You try AI to help with it. It saves you time. You use it again for a similar task. You’ve found a tool that works. At this stage, AI is like a very fast calculator — you reach for it when you need it, and you put it down when you don’t.

Stage Two: Integration. At some point, you stop asking “should I use AI for this?” and start asking “how should I approach this?” The distinction matters. When you’re in integration mode, AI is part of your workflow architecture rather than an optional add-on. You draft faster because you know you’ll refine with AI. You take rougher notes in meetings because you know you’ll clean them up. You start a document knowing it will be a collaboration. The AI is present in the process even before you open the app.

Stage Three: Partnership. This is the stage fewer people have reached, but more are moving toward. At this stage, you’re not just using AI to accelerate tasks; you’re genuinely thinking differently because the system is available. You explore ideas you would have dropped because developing them felt too labour-intensive. You interrogate assumptions that would previously have gone unchallenged because asking felt embarrassing. You draft, test, challenge, and iterate on your own in a way that would once have required a thought partner or a team. The AI isn’t replacing a tool. It’s replacing a conversation: one you previously couldn’t have, or couldn’t have often enough.

Most honest accounts from heavy AI users describe this third stage not as efficiency but as a change in the texture of thinking. More explored. More challenged. More confident at some times and more uncertain at others, because the AI also surfaces things you hadn’t considered.

Where This Is Breaking Down — And Why It Matters

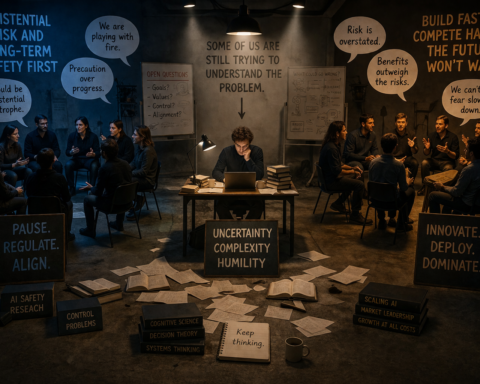

Any honest editorial on this topic has to acknowledge that the shift to “AI as daily partner” is not universal, not frictionless, and not working equally well for everyone.

The trust problem is real. Pew Research Center found in March 2026 that 70% of Americans say they have little or no trust in companies to use AI responsibly in their products. Half say the increased use of AI in daily life makes them feel more concerned than excited, a share that has risen consistently since 2021. These aren’t Luddite reactions. They are rational responses to a technology that has demonstrated real failure modes: hallucinations on important facts, privacy concerns around what the system remembers and who can access it, and genuine uncertainty about how the data is used.

If the shift to “AI as daily partner” requires trusting a system with your work history, your communication style, your priorities, and increasingly your decisions — and 70% of people don’t trust the companies building those systems — that is a genuine obstacle, not a perception problem to be managed with better marketing.

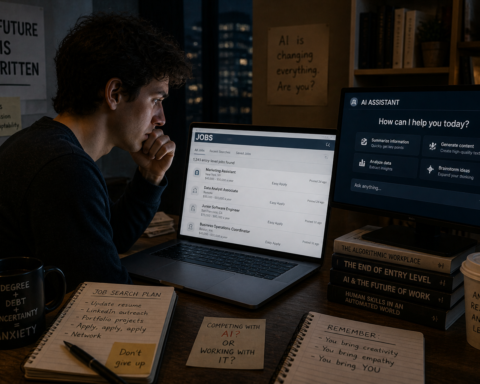

The skill gap is widening. Sixty percent of US adults now use AI in some form. But using AI and using AI well are very different things. The people who’ve reached Stage Three of the partnership model, who’ve genuinely changed how they think by working alongside AI daily, represent a much smaller percentage. And the gap between what a sophisticated user can extract from these tools and what a novice gets on a first attempt is large and growing. That gap is increasingly a professional skills gap with career implications, which makes it a bigger story than most AI coverage acknowledges.

The habituation concern deserves a paragraph. There is a legitimate question — not a Luddite one — about what it means cognitively to outsource increasing amounts of intellectual labour to an AI system. Pew found that about half of Americans believe AI will worsen people’s ability to think creatively and form meaningful relationships. The evidence on this is genuinely mixed, and the honest answer is that nobody knows yet. What we can say is that a tool powerful enough to change how you think carries the same responsibility as anything that powerful: using it well requires intention, not just access.

The Practical Reality of What “Daily Partner” Looks Like in 2026

Let’s be concrete about what the partnership model actually looks like in 2026 for the people using it well.

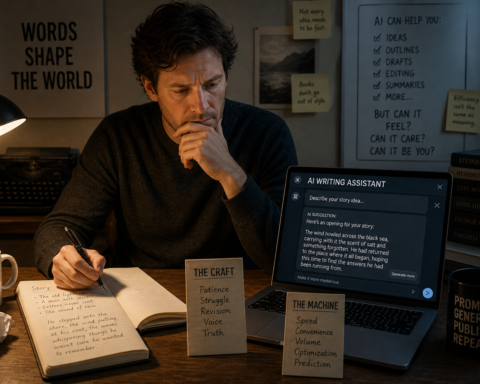

For writers and content creators, it looks like: drafting faster, editing with a second perspective, testing whether an argument holds by having AI steelman the opposite position, and using the saved time to go deeper on the ideas that matter rather than producing more volume.

For developers, it looks like: having a coding agent that works through problems while you sleep, catches context-spanning bugs you’d have missed, writes tests for the parts you hate testing, and explains the decisions it makes so you understand the code it produces. More than half of developers (51%) already use AI tools daily. The ones who use them best aren’t the ones who trust the output blindly; they’re the ones who know exactly where to verify.

For analysts and researchers, it looks like: AI doing the first sweep of the literature, flagging the papers worth reading in depth, generating the list of hypotheses from which the real thinking starts, and producing the preliminary data tables that frame the actual analysis. The researcher’s job is sharper, narrower, and higher-judgment than before.

For managers and leaders, it looks like: walking into meetings better prepared, having first drafts of communications that you actually send 60% of the time with minimal edits, and using AI to stress-test decisions before you make them — not to replace the judgment but to challenge it.

None of this is magic. In every case, the output is only as good as the direction given. The partnership model requires knowing what you want clearly enough to ask for it well, and knowing your domain well enough to evaluate what comes back. AI makes skilled people faster. It does not make unskilled people skilled.

Why “Partner” Is the Right Word — And Why It’s Also Misleading

The reason the word “partner” keeps appearing in this conversation — including in our headline — is that it captures something real about how the relationship changes at Stage Three. You’re not using a utility. You’re collaborating. There’s iteration. There’s judgment on both sides. There’s something that functions like trust, built over repeated interaction, calibrated through experience.

But “partner” also risks overstating something important. A partner has interests. A partner has accountability. A partner can push back in a way that costs something — to them, to the relationship. An AI system has none of these things in the way a human collaborator does. It is an extraordinarily sophisticated mirror and amplifier. It reflects your thinking back to you with speed and scale that changes what’s possible. But it doesn’t have skin in the game.

The most clear-eyed framing we’ve encountered comes from Cisco’s Aruna Ravichandran: “connected intelligence — where people, data, and digital workers work together side by side.” Not partners in the human sense. But not tools in the old sense either. Something genuinely new, for which the vocabulary is still being worked out.

What we know is this: the people treating AI as a disposable tool — something to reach for occasionally and put down when done — are operating with a fundamentally weaker capability than the people who have integrated it into how they work at the structural level. That gap is real, it’s measurable in time and output quality, and it is widening every quarter.

What This Means for You, Practically

If you’re reading this and you’re still in Stage One — using AI occasionally for specific tasks — there’s a simple experiment worth running.

Pick one recurring workflow. Something you do at least three times a week. Commit to involving AI in every single instance of that workflow for the next three weeks. Not to save time on the first attempt, but to build the working context that makes the collaboration meaningful. Brief it the same way each time. Correct it the same way each time. Let it learn the pattern of what you’re actually trying to produce.

At the end of three weeks, you’ll know whether the partnership model is real for your work — or whether AI genuinely isn’t the right tool for that particular thing. Both are valid outcomes. But you won’t know from occasional use. The value is in the accumulation.

The shift we’re describing isn’t inevitable. It requires intention. But for the people who’ve made it deliberately, the consistent report is not that AI has made their work easier. It’s that it has made their work different — in ways they didn’t anticipate and wouldn’t want to give back.

That’s what a tool becoming a partner actually feels like. And it’s worth paying attention to.