Everyone agrees there’s too much AI hype. But very few people are willing to say who’s generating it, why it keeps working, and what the actual cost is. This is that piece.

Let’s start by agreeing on something: the fact that AI hype exists is not news. Complaining about AI hype has itself become a genre of content. There are entire newsletters, YouTube channels, and LinkedIn personalities built around the “actually, AI is overhyped” take. It is, at this point, almost as predictable as the hype itself.

So this piece isn’t going to waste your time telling you that some AI announcements are exaggerated. You know that. What it’s going to do instead is something slightly different: it’s going to tell you who is doing it, why it keeps working even though everyone knows it’s happening, and what the actual, concrete cost is — because there is a real cost, and it’s being paid right now by real organizations making bad decisions based on bad information.

That’s the part most “AI hype is bad” articles skip. Let’s not skip it.

The Hype Isn’t Random. It Has an Org Chart.

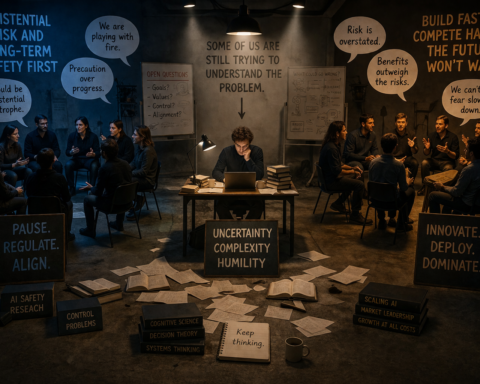

When we talk about AI hype, we tend to talk about it as though it’s atmospheric — some vague cultural excitement that floats around and makes people unreasonable. That’s not accurate. AI hype is produced by specific actors with specific incentives, and understanding who those actors are is the first step to not being taken in by them.

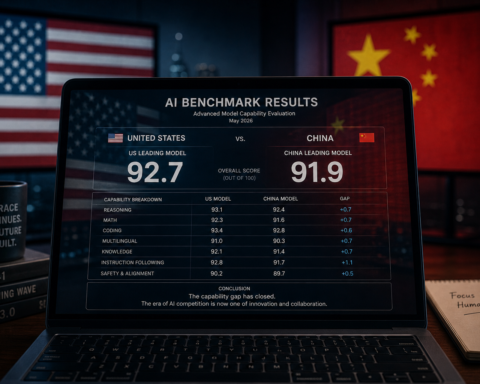

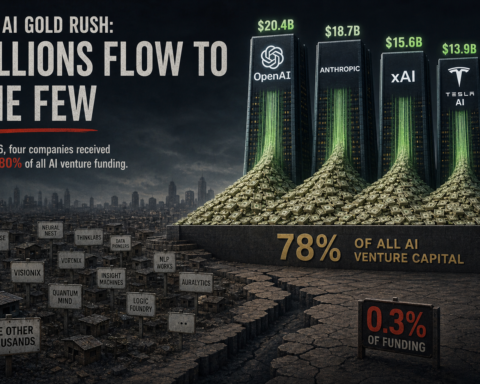

The labs are the most obvious source. OpenAI, Google DeepMind, Anthropic, Meta — these companies release models, and they also release the benchmarks on which those models are evaluated. When a company announces that its new model “outperforms human experts on 83% of knowledge work tasks,” that number is real — but it was measured on an evaluation the company helped design, under conditions the company selected, with results the company chose to publish. That’s not fraud. But it is a curated picture, and treating it as a complete picture is a mistake.

The enterprise software vendors are the next layer. Salesforce, Microsoft, ServiceNow, SAP — every major platform has rebranded large portions of its existing functionality as AI. Some of it genuinely is. A lot of it is machine learning that was already running under the hood, now sporting a chatbot interface and a larger price tag. The result is that “AI adoption” numbers look enormous even when the underlying capability change is modest.

The financial press amplifies everything. A funding round that would once have been reported as a boring Series B becomes a story about “the AI company that just raised $200 million to transform [insert industry].” The story needs a hook. AI is the hook. The more dramatic the claim in the press release, the more coverage it gets.

Consultants and analysts have their own incentive structure. Gartner’s Hype Cycle, for all its genuine usefulness, is itself a product that gets marketed. Predictions about AI transforming industries by 2028 justify the consulting engagements needed to prepare for that transformation. This isn’t cynicism: most of these people genuinely believe what they’re saying. But belief and accuracy are different things.

None of this means the people involved are lying. It means the system has a consistent bias toward the dramatic, the unprecedented, and the imminent — because those are the things that generate attention, investment, and revenue. That bias compounds over time into a picture of AI that is systematically more impressive and more urgent than the evidence supports.

The Numbers That Should Make You Pause

Here’s where it gets uncomfortable for the hype machine.

Deloitte’s Tech Trends 2026 report, not an anti-AI document by any means, found that only 11% of companies have AI agents fully operational in production environments, while 25% are experimenting in pilots. Think about that ratio. For every company running agents in production, there are more than two running experiments that haven’t yet translated into anything real.
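To make that ratio concrete, here is a minimal Python sketch that uses only the two percentages quoted above. The variable names are illustrative, and treating the two shares as separate, non-overlapping groups is an assumption, since the figures quoted here don't say whether they overlap.

```python
# Shares quoted above from Deloitte's Tech Trends 2026 report.
production_share = 0.11  # AI agents fully operational in production
pilot_share = 0.25       # experimenting in pilots

# Assumption: the two groups are treated as separate, non-overlapping populations.
pilots_per_production = pilot_share / production_share

print(f"Pilots per production deployment: {pilots_per_production:.2f}")  # prints 2.27
```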

A February 2026 study by the National Bureau of Economic Research, covering 6,000 executives, found that over 90% of firms saw no measurable impact on employment from AI over the prior three years. Not because AI isn’t changing things — it is — but because the changes are slower, narrower, and more uneven than the “AI will transform everything” headline implies.

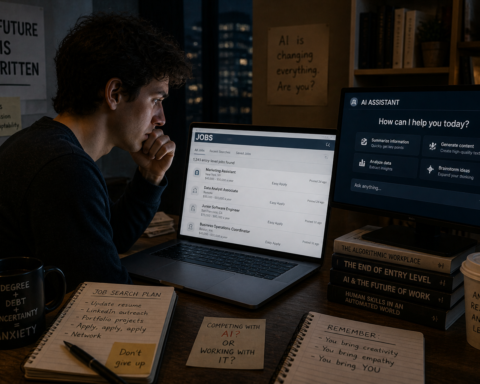

The World Economic Forum’s Future of Jobs Report 2026, released this month, projects a net positive job impact from AI over the next five years. That headline figure hides more nuance than either the “AI will eliminate all jobs” camp or the “AI creates more jobs than it destroys” camp tends to acknowledge: the actual picture involves significant displacement in specific roles alongside the creation of new ones, with the outcome heavily dependent on how aggressively organizations invest in reskilling.

IBM Watson Health is the case study nobody in the hype machine wants to talk about. In 2018, it was positioned as the future of AI in medicine, with billions invested, major hospital partnerships, and headlines everywhere. By 2025, it had become a textbook case of overpromising and underdelivering: clinical recommendation systems that failed to account for how doctors actually work, datasets that didn’t reflect the diversity of real patient populations, and a gap between what the marketing promised and what the technology could do that proved unbridgeable at the pace implied.

Watson didn’t fail because AI in medicine is impossible. AI in medicine is now producing genuinely impressive results — early cancer detection, drug discovery, clinical trial optimization. Watson failed because the hype ran a decade ahead of what the technology could actually deliver at the time. That gap is expensive. And it plays out over and over, in different industries and different forms.

“AI fatigue is real. People are realizing that AI isn’t the flawless oracle they hoped for. It gets things wrong — sometimes spectacularly wrong.” — SmarterMSP, January 2026

AI Fatigue Is Real — And It’s Costing More Than Attention

There’s a new phenomenon that doesn’t get discussed nearly enough: AI fatigue. Not skepticism. Not thoughtful critical engagement. Genuine fatigue — the kind where someone hears “AI” and their eyes glaze over because they’ve been told too many times that this tool, this model, this platform is going to change everything, and it didn’t.

This matters practically. When organizations experience AI fatigue, they start making one of two equally bad decisions.

The first bad decision is overcorrection: they abandon AI initiatives entirely, write off the technology as marketing, and go back to how things were before, missing out on the genuine productivity gains that are available right now to organizations willing to use these tools well.

The second bad decision is the opposite: they lean so hard into AI adoption to prove they’re not behind that they deploy tools they don’t understand, into workflows that aren’t ready, with governance frameworks that don’t exist. They’re chasing the headline rather than solving a problem. This is how you get pilots that never scale, chatbots that frustrate customers, and “AI strategies” that amount to a PowerPoint deck and an API key.

The era of endless pilot programs, as IFS put it in their 2026 predictions, is ending. Boards and executives are now being asked to show ROI on AI investments that were approved based on hype-cycle logic. In many cases, that ROI isn’t there. Not because AI doesn’t work, but because the use case was poorly chosen, the implementation was under-resourced, and the expectations were set by a vendor demo rather than a realistic operational assessment.

That’s the bill coming due. And it’s a real bill.

The Specific Claims That Need to Stop

In the spirit of being direct, here are some AI claims that are either false, misleadingly framed, or true in such a narrow sense that they function as lies in the wild.

“AI will replace your entire job within 18 months.” Microsoft AI chief Mustafa Suleyman said in February 2026 that all white-collar work could be automated within 18 months. This is not a reasonable position. The NBER study of 6,000 executives found that over 90% of firms saw no measurable employment impact from AI over three years. The jobs most at risk are specific entry-level functions: data entry, basic financial analysis, compliance reporting, administrative coordination. Entire roles, especially those involving complex judgment, relationship management, and contextual creativity, are not going anywhere on that timeline.

“Our AI achieves human-expert performance.” This is technically true in specific, controlled benchmark conditions. In the real world, where prompts are messy, context is incomplete, and stakes are high, the picture is more complicated. The benchmarks are useful guides but not production performance guarantees.

“Companies using AI are seeing 10x productivity gains.” Some are. Specifically, some individuals at specific companies using specific tools for specific tasks have documented extraordinary efficiency improvements. Extrapolating that to company-wide or industry-wide productivity is a category error. The median organization using AI tools is seeing meaningful but modest gains — more like 20-40 minutes saved per user per day on relevant tasks, which is valuable but not transformative without deliberate organizational change alongside it.
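A rough back-of-the-envelope check shows how far 20-40 minutes a day is from “10x”. The Python sketch below assumes an 8-hour workday, which is not a figure from any of the studies cited here, and the function name is purely illustrative. It also spreads the saving across the whole day rather than only the relevant tasks, so treat it as an order-of-magnitude comparison and nothing more.

```python
WORKDAY_MINUTES = 8 * 60  # assumption: 8-hour workday, not taken from the article's sources


def implied_speedup(minutes_saved: float, workday_minutes: float = WORKDAY_MINUTES) -> float:
    """Speedup factor if a full day's work now takes `minutes_saved` fewer minutes."""
    return workday_minutes / (workday_minutes - minutes_saved)


for saved in (20, 40):
    share = saved / WORKDAY_MINUTES  # fraction of the workday recovered
    print(f"{saved} min/day saved = {share:.1%} of the day, about {implied_speedup(saved):.2f}x")
```

Under that assumption, the saving works out to roughly 4% to 8% of the day, or a speedup of about 1.04x to 1.09x: valuable, but nowhere near 10x.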

“AI is coming for [your industry].” Every industry gets a version of this headline. Sometimes it’s true in important ways. More often, it describes the most automatable slice of the work in that industry, presented as though it describes the whole. Law is a good example: AI is genuinely changing document review, contract analysis, and legal research. It is not replacing the partner who understands the client’s politics, has relationships with opposing counsel, and exercises judgment on strategy.

What Responsible Coverage Actually Looks Like

Here’s where MEFAI stands, stated plainly.

AI is a genuinely powerful, genuinely important technology that is genuinely changing how work gets done in 2026. That’s not hype — it’s true. The Danfoss case, where AI agents cut customer response time from 42 hours to near real time. PepsiCo’s 20% throughput gains in manufacturing. The coding agent productivity data showing developers saving meaningful hours per week. These are real. They’re worth reporting, understanding, and learning from.

But the mechanism by which AI produces value is almost always specific, not general. It’s a particular agent handling a particular workflow. It’s a particular model proving out on a particular domain. It’s a particular team that invested in implementation, ran experiments, iterated, and built something that works. The generalization from “this worked here” to “AI will transform everything” is where the hype is introduced — and it’s introduced by people who benefit from it.

Responsible AI coverage means reporting the specifics, not just the headline. It means asking whose benchmark that is. It means following up on the launch announcement six months later to see if the deployment actually scaled. It means being willing to say “this didn’t work as claimed” when that’s what happened — even when the technology involved is impressive and the people building it are smart.

That standard is harder to meet than publishing the press release. It takes more research, more time, and more willingness to be unpopular with people who are trying to get you to cover their announcements favorably.

It is also, we think, the only kind of coverage worth reading.

The Honest Position on Where We Are

2026 is not a year of AI disappointment. The technology is genuinely advancing, and the advances are genuinely meaningful. GPT-5.4 performing at or above human experts on specific knowledge work tasks is real. AI-assisted drug discovery reaching clinical trials is real. Agentic workflows handling multi-day projects with minimal oversight is real.

But 2026 is also the year the bill is coming due on a decade of overclaiming. The organizations that based their AI strategies on the most ambitious headlines — rather than the most honest ones — are now being asked to show results they can’t produce. The workers who were told their jobs were imminently at risk spent two years in anxiety about a timeline that, for most of them, hasn’t materialized and may not for years. The investors who funded AI companies based on TAM projections derived from hype-cycle logic are beginning to ask harder questions.

TechCrunch put it well at the start of this year: if 2025 was the year AI got a vibe check, 2026 is the year the tech gets practical. The party isn’t over. But the industry is starting to sober up.

That sobriety is healthy. It’s what allows the technology to be used well. And used well is the only version of AI that actually matters.

We’d rather be the publication that helps you use it well than the one that made you excited about it for a quarter and left you disappointed.