Perplexity isn’t a better ChatGPT. It’s a different tool entirely — one that solves a specific problem better than anything else available. The question is whether that problem is one you have every day.

There’s a browser tab I keep open at all times now that I didn’t have a year ago. It’s Perplexity. Not because it replaced ChatGPT or Claude — I still use both of those — but because it replaced something specific that neither of those tools does well: fast, verifiable, cited research on real questions about the current state of the world.

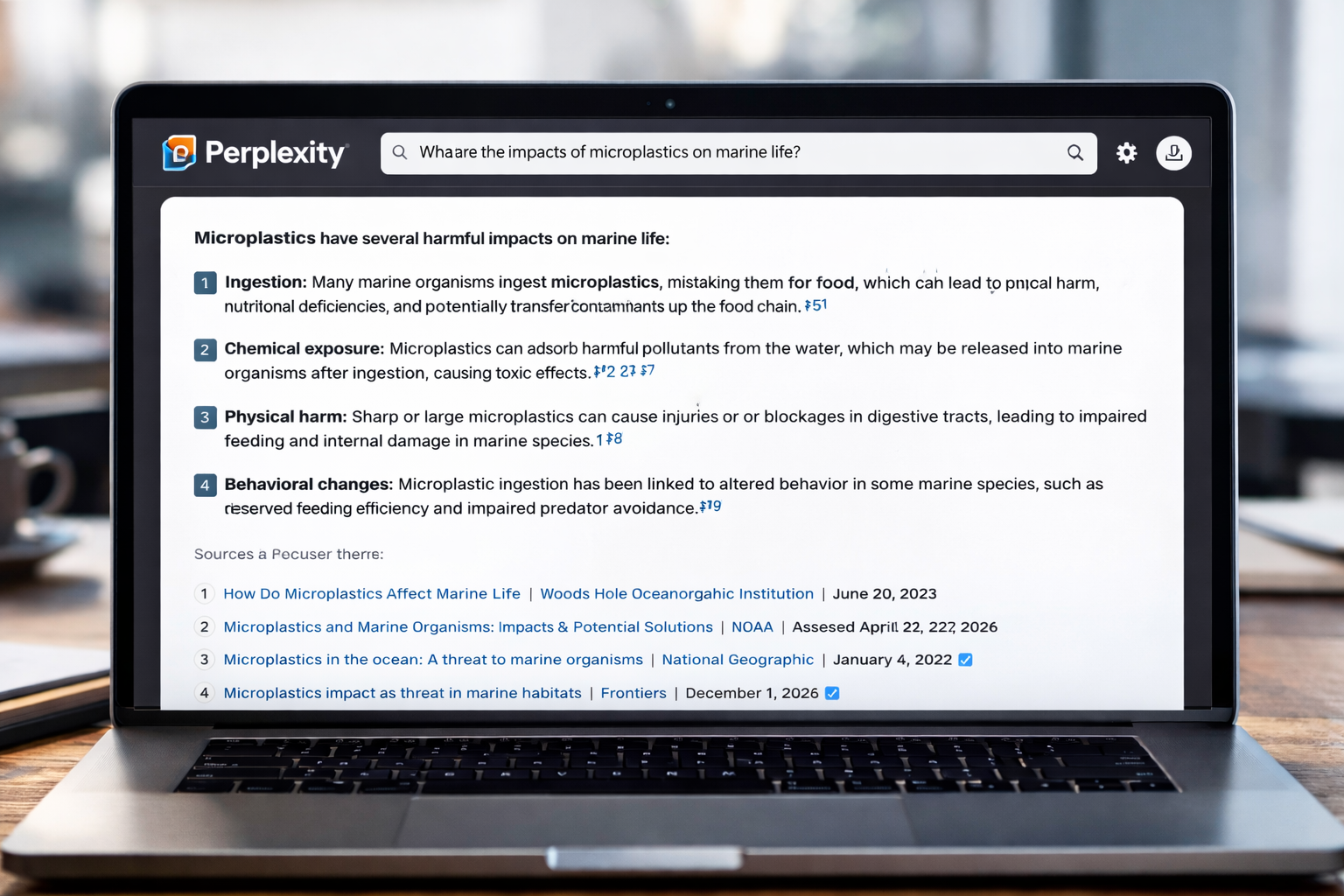

Let me be precise about what that means before we go further, because the framing matters. When I need to ask “what AI models does Salesforce currently use in their enterprise stack?” or “what are the current FDA requirements for AI-assisted diagnostic tools?” or “what did the Fed say about rates in the last 48 hours?”, ChatGPT and Claude are unreliable for questions like these. They either produce confident-sounding answers that are outdated or hallucinated, or they tell me their knowledge has a cutoff. Perplexity goes to the web in real time, reads multiple sources, synthesises the answer, and shows me where it got each claim.

That specific use case — current, cited, verifiable information — is where Perplexity is genuinely the best tool available. The question is how often that use case applies to your work.

This review comes from 30 days of daily use, and it is honest about what works and what doesn’t.

What Perplexity Actually Is

Perplexity AI is an AI-powered answer engine that combines real-time web search with large language models. When you ask it a question, it doesn’t rely only on training data with a cutoff date. It searches the web right now, reads the results, and synthesises them into a direct answer with numbered citations attached, so you can click through to the original source and verify what it says.

Think of it as a research assistant that reads the internet for you and then presents findings with receipts.

The company has grown significantly: from 230 million monthly queries in August 2024 to over 780 million by mid-2025 — a 239% jump in under a year. It reached a $20 billion valuation in early 2026 and crossed $200 million in annual recurring revenue. Its user base skews heavily professional: 80% are college graduates, 30% are senior company leaders, and 65% are high-income professionals. These aren’t casual users. They’re researchers, analysts, and executives who found that Perplexity saves meaningful time on information-intensive work.
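The growth figure is easy to sanity-check. Here is a minimal sketch of the arithmetic, using only the two query counts quoted above; the function name is my own.

```python
def percent_increase(old: float, new: float) -> float:
    """Return the percentage increase from old to new."""
    return (new - old) / old * 100


# Monthly query counts quoted in this review.
queries_aug_2024 = 230_000_000
queries_mid_2025 = 780_000_000

growth = percent_increase(queries_aug_2024, queries_mid_2025)
print(f"{growth:.0f}% increase")  # 239% increase
```

Note that a 239% increase means mid-2025 volume was roughly 3.4 times the August 2024 figure, not 2.4 times.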

Pricing: What You Get at Each Tier

Free tier: Standard search with limited Pro Searches per day. For casual research and quick information lookups, the free version handles most basic queries. Most people who try it keep the tab open alongside Google.

Pro ($20/month or $200/year): Pro Searches (the multi-step research mode) at 300+ per day, which is effectively unlimited even for intensive research workflows. Access to GPT-5.2, Claude Sonnet 4.6, and Gemini 3 Pro, with model switching within a single interface. File uploads. Image generation (basic). Spaces for collaborative research.

Enterprise Pro ($40/seat/month): Shared Spaces, admin controls, SOC 2 Type II compliance, zero data retention. For teams coordinating research across multiple people.

The honest value assessment at $20/month: Perplexity Pro sits at exactly the same price as ChatGPT Plus. The use cases barely overlap. ChatGPT Plus is better for writing, brainstorming, and reasoning tasks. Perplexity Pro is better for research, fact-checking, and any task where source verification matters. If you’re a daily AI user, these are complementary tools rather than competitors.
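If you are weighing monthly against annual billing, the numbers from the tiers above work out as follows. This is a rough sketch: the prices are the ones listed in this review, while the five-seat team size is a hypothetical figure I picked purely for illustration.

```python
# Prices as listed in this review (USD).
PRO_MONTHLY = 20
PRO_ANNUAL = 200
ENTERPRISE_SEAT_MONTHLY = 40

# Hypothetical team size, chosen only for illustration.
TEAM_SEATS = 5


def annual_savings(monthly_price: int, annual_price: int) -> tuple[int, float]:
    """Return (dollars saved, percent saved) by paying annually instead of monthly."""
    paid_monthly = monthly_price * 12
    saved = paid_monthly - annual_price
    return saved, saved / paid_monthly * 100


saved, pct = annual_savings(PRO_MONTHLY, PRO_ANNUAL)
effective_monthly = PRO_ANNUAL / 12
enterprise_yearly = ENTERPRISE_SEAT_MONTHLY * TEAM_SEATS * 12

print(f"Annual plan saves ${saved} ({pct:.1f}%)")              # Annual plan saves $40 (16.7%)
print(f"Effective monthly cost: ${effective_monthly:.2f}")     # Effective monthly cost: $16.67
print(f"Enterprise Pro, {TEAM_SEATS} seats: ${enterprise_yearly}/year")  # Enterprise Pro, 5 seats: $2400/year
```

In other words, annual billing on Pro amounts to two months free.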

What I Actually Tested — And What I Found

Weeks 1-2: Research tasks

I threw complex, multi-source research questions at Perplexity daily: competitive landscape analysis for AI coding tools, background research on regulatory changes, fact-checking claims before publishing. The result was consistently impressive.

When I asked “what AI models does OpenAI currently offer and how does pricing compare to Anthropic?” — Perplexity returned a structured, cited answer pulling from OpenAI’s current pricing page and Anthropic’s documentation, with the comparison table I needed in under 60 seconds. The same query to ChatGPT returned information that was partially outdated and not sourced.

Pro Search — Perplexity’s multi-step research mode — is genuinely the standout feature. You submit a complex question, and instead of returning a single answer, Perplexity breaks it into sub-queries, runs multiple parallel searches, synthesises across sources, and returns a structured report. For the query “Analyse the competitive landscape of AI coding tools in 2026, including market share, pricing, and developer adoption trends” — the process took about 3 minutes and returned what would have been a half-day of manual research.
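To make the "break into sub-queries, search in parallel, synthesise" flow concrete, here is a toy sketch of that general pattern. It is not Perplexity's implementation or API: every function name is hypothetical, the planner is hard-coded, and the search step is a stub that returns placeholder data from example.com instead of touching the network.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Finding:
    sub_query: str
    claim: str
    source_url: str


def decompose(question: str) -> list[str]:
    """Hypothetical planner: split a broad question into narrower sub-queries."""
    aspects = ["market share", "pricing", "developer adoption"]
    return [f"{question}: {aspect}" for aspect in aspects]


def search(sub_query: str) -> Finding:
    """Stub standing in for a real web search; returns canned placeholder data."""
    aspect = sub_query.split(": ")[-1]
    slug = aspect.replace(" ", "-")
    return Finding(sub_query, f"Placeholder finding about {aspect}", f"https://example.com/{slug}")


def synthesise(findings: list[Finding]) -> str:
    """Combine findings into a report with numbered citations and a source list."""
    claims = [f"{f.claim} [{i}]" for i, f in enumerate(findings, start=1)]
    sources = [f"[{i}] {f.source_url}" for i, f in enumerate(findings, start=1)]
    return "\n".join(claims + ["", "Sources:"] + sources)


def research(question: str) -> str:
    sub_queries = decompose(question)
    # Run the sub-query searches concurrently; pool.map preserves input order.
    with ThreadPoolExecutor(max_workers=len(sub_queries)) as pool:
        findings = list(pool.map(search, sub_queries))
    return synthesise(findings)


report = research("AI coding tools in 2026")
print(report)
```

The point of the pattern is that each numbered claim in the final report traces back to one specific source, which is what makes the output checkable.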

Citation accuracy data supports my experience: Perplexity outperforms ChatGPT on citation accuracy at 78% versus 62%, according to independent testing. That gap matters when the accuracy of your research affects decisions.

Week 3: Current information and news tracking

This is Perplexity’s clearest advantage over every other AI tool. AI product release cycles have accelerated dramatically in 2025-2026 — there are meaningful developments weekly. When I asked ChatGPT “what are the most significant AI product launches in the last two weeks?” it either hedged about knowledge cutoffs or produced outdated information. When I asked Perplexity the same question, it pulled live web results, synthesised them, and cited every source. I could verify each claim in under 30 seconds.

For anyone covering a fast-moving industry — AI, finance, biotech, policy, legal — this difference is not a small convenience. It’s the difference between a tool that’s useful for research and one that isn’t.

Week 4: Stress-testing the edges

I pushed Perplexity on topics where I knew the information was contested or nuanced. This is where the limitations became clear.

Perplexity can and does make mistakes. It occasionally misinterprets sources, presents contested claims as settled facts, or blends findings from different studies in misleading ways. Source quality varies: Perplexity cites a personal blog or marketing page with the same apparent authority as a peer-reviewed paper. You need to evaluate sources yourself — the citations are a starting point, not a guarantee.

For complex, multi-step logical reasoning — philosophical analysis, mathematical proofs, deep causal reasoning — Claude or GPT-5.4 in thinking mode are substantially stronger.

The Key Features Worth Understanding

Pro Search: Multi-step research mode that performs like a junior analyst. It typically takes 30-90 seconds (my broadest query ran for about 3 minutes), runs multiple sub-queries, and produces a more thorough response than standard search. Available in limited quantities on the free plan, unlimited on Pro.

Deep Research: Runs dozens of searches, reads through the results, and produces a detailed report with sources — similar to what a research assistant might produce in a few hours. For the most demanding research tasks, this is where Perplexity genuinely earns its price.

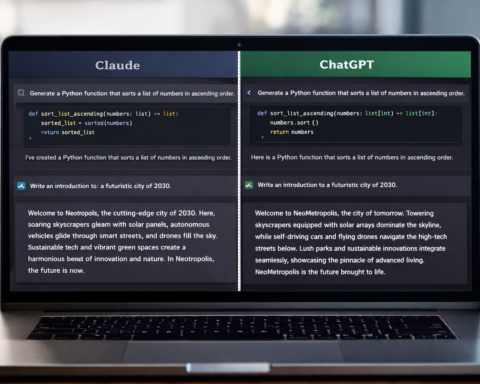

Model switching: Pro users can toggle between GPT-5.2, Claude Sonnet 4.6, Gemini 3 Pro, and Perplexity’s own Sonar models within a single interface. Useful for getting a second opinion on important findings or using each model where it’s strongest.

Spaces: Shared research workspaces that function like project folders. Teams can build persistent research libraries that Perplexity searches alongside the live web when answering questions. Pro users can upload 50 files per Space (50MB each).

Focus modes: Academic mode specifically searches scholarly databases — immediately more useful than generic web search for anyone checking whether a claim has scientific backing. Finance mode filters for financial data and 10-K filings. Hugely time-saving for sector-specific research.

Model Council (added February 2026): Lets you compare answers from multiple AI models simultaneously for important decisions. Getting the same question answered by three different models and comparing their reasoning is genuinely valuable for high-stakes research.

Where Perplexity Doesn’t Work

Creative and generative tasks. Perplexity is not a tool for writing essays, generating code, drafting marketing copy, or creative work. Other tools — Claude, ChatGPT — do these things far better.

Local and shopping search. Perplexity doesn’t do product comparisons, price tracking, or local business discovery the way Google does. Google remains unmatched here.

Complex multi-step reasoning. For nuanced analysis that requires extended logical chains, Claude or GPT-5.4 in thinking mode are stronger. Perplexity synthesises; it doesn’t deeply reason.

Organisation over time. Thread-based design works well for a single research session, but after a few weeks of heavy use you end up with dozens of threads and no good way to resurface them, which becomes a friction point. The Spaces feature helps for organised research projects, but casual thread management remains weak.

The Honest Verdict

After 30 days of daily use: Perplexity Pro at $20/month earns its price specifically if your work regularly requires current, sourced information.

Pay the $20/month if: You publish anything that requires source verification. You track a fast-moving industry. You’re currently spending 30+ minutes per day on manual research and fact-checking. You’re a student or researcher who needs reliable sources quickly.

Skip it and use the free tier if: Your research needs are met by Google. You primarily need AI for content creation. You’re only getting started with AI tools and haven’t yet established a daily research workflow.

The workflow that emerged from 30 days: start every research session in Perplexity to establish what’s actually true and current, then move to Claude or ChatGPT for the writing and synthesis work. Perplexity finds; the other tools create. That division of labour is where the real value lives.

Rating: 4.5/5 — the best AI research tool available, with a clear caveat that accuracy verification remains your responsibility.